Following up on the previous blog post about the patch precheck, here is a quick overview of what happens on Exadata Cloud at Customer nodes when Grid Infrastructure patching is launched from the web interface, the Oracle Cloud control plane.

The whole patch process is not that different from the precheck process. Most of the steps are the same; the main difference, of course, is that the OPatch command actually … patches the Grid Infrastructure 😉

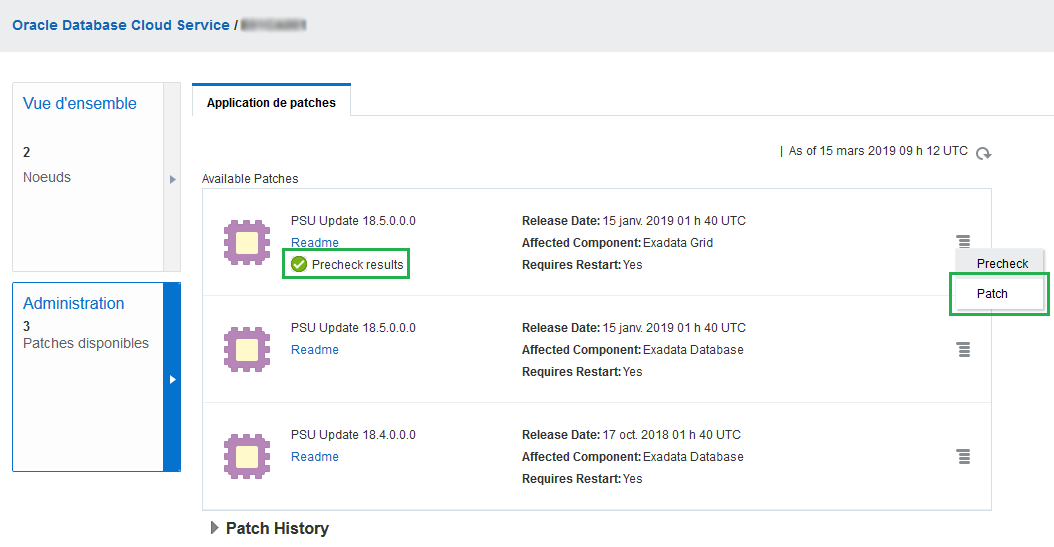

Let’s launch the patching process with a simple click on the Oracle Cloud control plane (sorry for the Fren-Glish screenshots …) :

When the patch is launched, the log file exadbcpatch.log in directory /var/opt/oracle/log/exadbcpatch/ on both nodes once again records every step performed. I used egrep to quickly identify all the commands launched :

egrep 'Output from cmd|cmd took' exadbcpatch.log
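The same filtering can be done programmatically when the goal is to see which command took how long. The following is a minimal Python sketch, assuming the two line formats visible in the log excerpts of this post ("Output from cmd … run on <host> is:" and "cmd took … seconds"); the function name `pair_commands` is made up for illustration and is not part of the Cloud Tooling.

```python
import re
from typing import Iterable, List, Tuple

# The two kinds of lines the egrep filter keeps (formats assumed from the excerpts).
CMD_RE = re.compile(r"^\S+ \S+ - Output from cmd (?P<cmd>.*) run on \S+ is:\s*$")
TOOK_RE = re.compile(r"^\S+ \S+ - cmd took (?P<secs>[0-9.]+) seconds\s*$")


def pair_commands(lines: Iterable[str]) -> List[Tuple[str, float]]:
    """Return (command, duration_in_seconds) pairs in log order."""
    pairs: List[Tuple[str, float]] = []
    pending = None  # last command seen that has no duration yet
    for line in lines:
        match = CMD_RE.match(line)
        if match:
            pending = match.group("cmd")
            continue
        match = TOOK_RE.match(line)
        if match and pending is not None:
            pairs.append((pending, float(match.group("secs"))))
            pending = None
    return pairs
```

Calling `pair_commands(open("exadbcpatch.log"))` and sorting the result by duration gives a quick view of the slowest steps.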

The beginning of the process is the same as for the precheck : create staging directories, check the Grid Infrastructure version, download and install the latest OPatch, download the patch, check the OPatch version, check patch compatibility with OPatch.

Let’s follow the rest of the steps on the first node :

- A backup of the Grid Infrastructure Home is stored on an ACFS file system :

2019-03-27 16:06:04.292918 - INFO: making backup of /u01/app/18.1.0.0/grid

2019-03-27 16:06:04.293059 - INFO: Taking oracle home backup

2019-03-27 16:06:04.293403 - Output from cmd mkdir -p /u02/app_acfs/exapatch/node01; chmod 0775 /u02/app_acfs/exapatch/node01; rm -rf /u02/app_acfs/exapatch/node01/home_backup.suc;tar -zcpf /u02/app_acfs/exapatch/node01/OraGI18Home1.tar.gz -C /u01/app/18.1.0.0/grid . --exclude=network; chown -R oracle:oinstall /u02/app_acfs/exapatch/node01/OraGI18Home1.tar.gz; touch home_backup.suc run on localhost is:

[...]

2019-03-27 16:17:06.749519 - cmd took 662.455512046814 seconds
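To make the logged tar command easier to read, here is a rough Python equivalent of its archiving part, offered only as an illustration. It assumes that `--exclude=network` is meant to skip every path component named `network`; the function names are hypothetical, and the sketch does not reproduce the ownership change or the `home_backup.suc` marker file.

```python
import tarfile
from pathlib import PurePosixPath
from typing import Optional


def _skip_network(member: tarfile.TarInfo) -> Optional[tarfile.TarInfo]:
    # Drop any entry with a path component named "network"; returning
    # None for a directory also skips everything beneath it.
    if "network" in PurePosixPath(member.name).parts:
        return None
    return member


def backup_home(src_home: str, dest_tgz: str) -> None:
    """Archive the contents of src_home into a gzip-compressed tarball."""
    with tarfile.open(dest_tgz, "w:gz") as tar:
        # arcname="." stores paths relative to the home, like `tar -C src_home .`
        tar.add(src_home, arcname=".", filter=_skip_network)
```

The destination archive should live outside the source home, as it does in the log (ACFS file system versus /u01), so the archive never ends up inside itself.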

- Other files are backed up in the same ACFS file system :

2019-03-27 16:17:06.749747 - INFO: Taking backup of files_to_keep

2019-03-27 16:17:06.750002 - Output from cmd cd /u02/app_acfs/exapatch/node01; mkdir OraGI18Home1_filestokeep; chown -R oracle:oinstall OraGI18Home1_filestokeep run on localhost is:

2019-03-27 16:17:06.758079 - cmd took 0.00771307945251465 seconds

2019-03-27 16:17:06.758331 - INFO: filestokeep is running

[...]

2019-03-27 16:17:06.763099 - INFO: Starting files_to_keep src_oh=/u01/app/18.1.0.0/grid dest_oh=/u02/app_acfs/exapatch/node01/OraGI18Home1_filestokeep

[...]

2019-03-27 16:17:07.653656 - INFO: Completed files_to_keep

Let’s see what is in the /u02/app_acfs/exapatch/node01/OraGI18Home1_filestokeep directory :

[root@node01 exadbcpatch]# ll /u02/app_acfs/exapatch/node01/OraGI18Home1_filestokeep/*

/u02/app_acfs/exapatch/node01/OraGI18Home1_filestokeep/dbs:

total 20

-rw-rw---- 1 grid oinstall 1697 Mar 21 11:25 ab_+ASM1.dat

-rw-rw---- 1 grid oinstall 1544 Mar 21 11:26 hc_+APX1.dat

-rw-rw---- 1 grid oinstall 1544 Mar 21 11:26 hc_+ASM1.dat

-rw-r--r-- 1 grid oinstall 631 Mar 21 10:22 initbackuppfile.ora

-rw-r--r-- 1 grid oinstall 3079 May 14 2015 init.ora

/u02/app_acfs/exapatch/node01/OraGI18Home1_filestokeep/lib:

total 435368

-rw-r--r-- 1 grid oinstall 1841852 Apr 12 2017 libiomp5.so

-rw-r--r-- 1 grid oinstall 40165291 Apr 13 2017 libmkl_avx.so

-rw-r--r-- 1 grid oinstall 28137653 Apr 13 2017 libmkl_core.so

-rw-r--r-- 1 grid oinstall 30228742 Apr 13 2017 libmkl_def.so

-rw-r--r-- 1 grid oinstall 8932574 Apr 13 2017 libmkl_gf_ilp64.so

-rw-r--r-- 1 grid oinstall 9679729 Apr 13 2017 libmkl_gf_lp64.so

-rw-r--r-- 1 grid oinstall 18149432 Apr 13 2017 libmkl_gnu_thread.so

-rw-r--r-- 1 grid oinstall 8987353 Apr 13 2017 libmkl_intel_ilp64.so

-rw-r--r-- 1 grid oinstall 9734508 Apr 13 2017 libmkl_intel_lp64.so

-rw-r--r-- 1 grid oinstall 28634975 Apr 13 2017 libmkl_intel_thread.so

-rw-r--r-- 1 grid oinstall 37364034 Apr 13 2017 libmkl_mc3.so

-rw-r--r-- 1 grid oinstall 36067812 Apr 13 2017 libmkl_mc.so

-rw-r--r-- 1 grid oinstall 5472055 Apr 13 2017 libmkl_rt.so

-rw-r--r-- 1 grid oinstall 12981845 Apr 13 2017 libmkl_sequential.so

-rw-r--r-- 1 grid oinstall 11163969 Apr 13 2017 libmkl_vml_avx.so

-rw-r--r-- 1 grid oinstall 5455790 Apr 13 2017 libmkl_vml_def.so

-rw-r--r-- 1 grid oinstall 9520984 Apr 13 2017 libmkl_vml_mc2.so

-rw-r--r-- 1 grid oinstall 9720553 Apr 13 2017 libmkl_vml_mc3.so

-rw-r--r-- 1 grid oinstall 9536045 Apr 13 2017 libmkl_vml_mc.so

-rw-r--r-- 1 grid oinstall 95310549 Apr 9 2018 libopc.so

/u02/app_acfs/exapatch/node01/OraGI18Home1_filestokeep/sqlpatch:

total 52

drwxr-xr-x 3 grid oinstall 20480 Apr 20 2018 27676517

tnsnames.ora and sqlnet.ora are also backed up under /u02/app_acfs/exapatch/node01/network_grid.ORG/network/admin.

- Now that the setup phase is complete, the patching is launched (since OPatch 12.2.0.1.5, option -ocmrf is deprecated, but it is still used in this command) :

2019-03-27 16:17:19.696893 - INFO: Number of nodes which have grid - 2.

2019-03-27 16:17:19.697017 - INFO: grid version = 18.2.0.0.0

2019-03-27 16:17:19.697328 - Output from cmd /u01/app/18.1.0.0/grid/OPatch/opatchauto apply -inplace -ocmrf /var/opt/oracle/exapatch/ocm.rsp -oh /u01/app/18.1.0.0/grid /u02/exapatch/grid/28828717/28828717 2>&1 > /var/opt/oracle/log/exadbcpatch/applylog.326781 run on localhost is:

2019-03-27 16:26:35.730541 - cmd took 556.032791852951 seconds

[...]

2019-03-27 16:26:44.305878 - INFO: psu patch applied successfully on instance node01

We can see that this is just a “regular” opatchauto run, and the output looks really familiar :

[root@node01 exadbcpatch]# cat /var/opt/oracle/log/exadbcpatch/applylog.326781

[...]

Executing OPatch prereq operations to verify patch applicability on home /u01/app/18.1.0.0/grid

Patch applicability verified successfully on home /u01/app/18.1.0.0/grid

Bringing down CRS service on home /u01/app/18.1.0.0/grid

CRS service brought down successfully on home /u01/app/18.1.0.0/grid

Start applying binary patch on home /u01/app/18.1.0.0/grid

Binary patch applied successfully on home /u01/app/18.1.0.0/grid

Starting CRS service on home /u01/app/18.1.0.0/grid

CRS service started successfully on home /u01/app/18.1.0.0/grid

OPatchAuto successful.

[...]

OPatchauto session completed at Wed Mar 27 16:26:35 2019

Time taken to complete the session 9 minutes, 16 seconds
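The session time reported by opatchauto can be cross-checked against the "cmd took" value in exadbcpatch.log: 9 minutes and 16 seconds is 9 × 60 + 16 = 556 seconds, which matches the 556.03 seconds logged for the command. A small Python sketch of that check follows; the helper names are hypothetical, and the handling of an "hours" unit is an assumption, since the excerpt only shows minutes and seconds.

```python
import re

# Seconds per unit; "hour" is assumed, it does not appear in the excerpt.
_UNIT_SECONDS = {"hour": 3600, "minute": 60, "second": 1}


def session_seconds(line: str) -> int:
    """Convert a 'Time taken to complete the session ...' line to whole seconds."""
    total = 0
    for value, unit in re.findall(r"(\d+) (hour|minute|second)s?", line):
        total += int(value) * _UNIT_SECONDS[unit]
    return total


def apply_succeeded(apply_log_text: str) -> bool:
    """True if the success marker seen in the apply log above is present."""
    return "OPatchAuto successful." in apply_log_text
```

For the run shown here, `session_seconds("Time taken to complete the session 9 minutes, 16 seconds")` returns 556.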

- After the clean-up step, the whole process is completed successfully on the first node. Of course, all these steps are then performed on the second node.

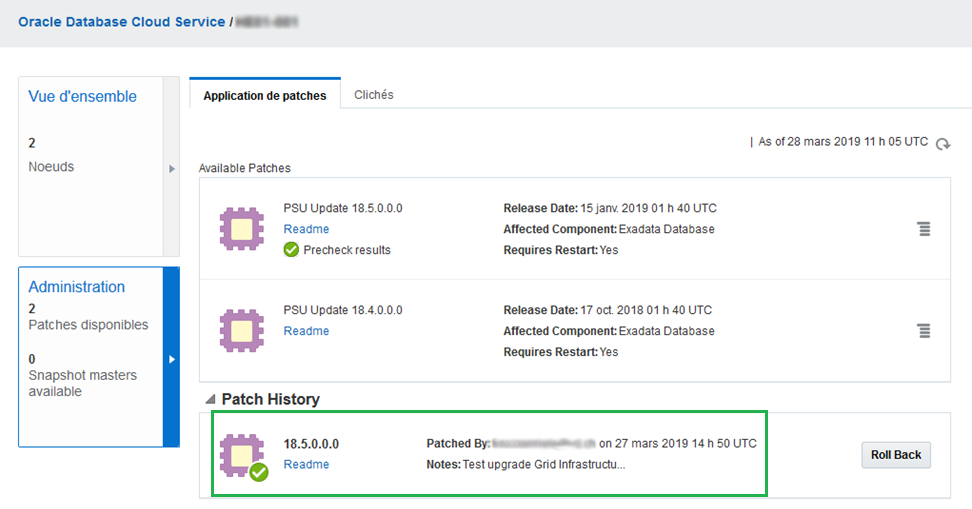

- Here is the final result in the Oracle Cloud control plane :

- And of course Opatch confirms :

[grid@node01 ~]$ opatch lspatches

28864607;ACFS RELEASE UPDATE 18.5.0.0.0 (28864607)

28864593;OCW RELEASE UPDATE 18.5.0.0.0 (28864593)

28822489;Database Release Update : 18.5.0.0.190115 (28822489)

28547619;TOMCAT RELEASE UPDATE 18.0.0.0.0 (28547619)

28435192;DBWLM RELEASE UPDATE 18.0.0.0.0 (28435192)

OPatch succeeded.
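When the same check has to be repeated on several nodes, the `opatch lspatches` output is easy to turn into a lookup table. Here is a minimal Python sketch, assuming the `<patch id>;<description>` layout shown above; `parse_lspatches` is a made-up helper name, not an OPatch feature.

```python
from typing import Dict


def parse_lspatches(output: str) -> Dict[str, str]:
    """Map each patch id to its description, ignoring non-patch lines."""
    patches: Dict[str, str] = {}
    for line in output.splitlines():
        patch_id, sep, description = line.strip().partition(";")
        # Keep only lines of the form "<digits>;<description>".
        if sep and patch_id.isdigit():
            patches[patch_id] = description
    return patches
```

Comparing the dictionaries obtained on node01 and node02 is then a simple equality test.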

Although I am not a fan of a Grid Infrastructure home path that contains a version number, which quickly becomes outdated (the home is still /u01/app/18.1.0.0/grid after patching to 18.5), patching Grid Infrastructure is made easy on Exadata Cloud at Customer. The whole process took approximately 50 minutes and I did not encounter any problem. Just make sure that all the prerequisites are met (enough space on the file systems, correct Cloud Tooling configuration, compatible patch, etc.) before running a precheck or a patch.
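As an illustration of the free-space prerequisite, here is a small Python sketch that checks a set of mount points against minimum free sizes. The function name and the thresholds are purely hypothetical; the space the Cloud Tooling actually requires is not stated in this post.

```python
import shutil
from typing import Dict


def free_space_report(min_free_gib: Dict[str, float]) -> Dict[str, bool]:
    """For each path, report whether at least the given number of GiB is free."""
    report: Dict[str, bool] = {}
    for path, needed_gib in min_free_gib.items():
        free_gib = shutil.disk_usage(path).free / 2**30
        report[path] = free_gib >= needed_gib
    return report
```

A call such as `free_space_report({"/u01": 15, "/u02": 15})` (illustrative values only) returns one boolean per file system.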
